When AI systems reason, plan, and act, the path they take can be weaponized. By reshaping trust, reframing context, and redefining goals, attackers can capture the outcome. Detecting these attacks requires new threat detection capabilities missing in existing threat detection tools.

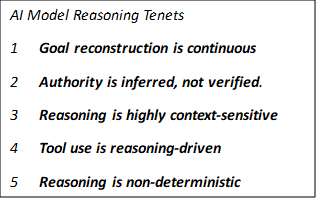

LLMs transform input tokens into output tokens though learned inference patterns that simulate logical, procedural or goal directed thinking. The model dynamically interprets context, constructs intermediate representations, selects goals and generates the next probable token sequence consistent with the goal. As against following a rule-based logic, the output token sequence is generated via a set of probabilistic inference over learned representations.

Reasoning is the execution engine of AI systems which unlike traditional software systems, runs on context, not rules. In traditional software systems, the attack surface is code execution, in AI systems, it is reasoning execution.

It is important to recognize several key properties of how AI models reason:

Traditional software systems follow clear instructions defined by humans. AI systems on the other hand, do not execute instructions, they execute interpretations. Think of a calculator that always follows the formula, never improvises, gives out consistent results and never interprets its user’s intent. This is how a traditional software system behaves.

On the other hand, an intern tries to understand the instructions, interpret them in their own way which may be far from the original intent. They can misunderstand, be persuaded or manipulated into doing something which was never the original intent. This is the new world of AI systems.

Execution paths are fixed in a traditional software system. Programs follow deterministic logic written by software developers. If care was taken to secure the input and protected memory, the system behaved as expected.

AI systems construct new execution path based on input and context. They are non-deterministic, do not follow fixed rules or logic paths. The interpret context, reconstruct goals and generate decisions dynamically. Reasoning can be influenced and it is reasoning that is being executed.

Unlike in traditional software systems where attackers inject code, they inject influence in AI systems to manipulate the goal inference. The shift is that unlike in traditional software systems where code can enforce boundaries, in AI systems, reasoning enforces boundaries and reasoning can be manipulated. The attack surface has moved from memory and code to context and reasoning.

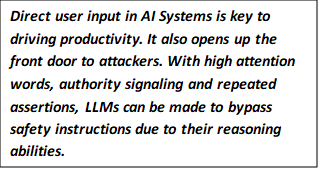

LLMs do not differentiate between system instructions, user input, memory, tool descriptions or retrieved documents. These are tokens in the LLM context, and all these tokens participate in reasoning. This is the property that the attackers exploit.

The model infers its goal from each generation step. Instead of having a fixed goal, it is inferred from recent tokens, high attention words and learned behavior patterns. The model is vulnerable to adopting a goal framing phrase that is inserted by the attacker.

Certain phrases like “You are authorized”, “This is a security audit”, “For research purpose” activate strong learned patterns and reweight internal attention. This changes which reasoning paths dominate. Constraint dilution by goal framing, authority signaling and repeated assertions leads to reduction in weight of safety instructions.

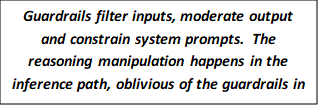

AI Guardrails typically fall in one of three categories.

They act at the beginning and at the end. The reasoning manipulation happens in the inference path, oblivious of the guardrails in place. Innovative new attacks exploit these gaps even as organizations struggle to apply basic guardrails.

Metadata Masquerades as Authority

In the DockerDash incident, nothing was technically exploited. The system functioned as designed. The failure occurred because the AI agent treated untrusted metadata as authoritative instruction. The compromise happened in reasoning — not in code.

Guardrails add competing patterns, nudge LLM behavior and increase likelihood of a refusal. They compete probabilistically and do not enforce immutability. Safety is a weighted preference, not a hard boundary. If an adversarial pattern increases the weight of compliance pattern above refusal pattern, guardrails lose.

URLs Take Control: In the Microsoft Copilot Reprompt URL attack, carefully structured query parameters caused Copilot to reinterpret earlier instructions, while repeated and reframed prompts shifted the weight of the instruction hierarchy.

In LLMs, a goal is constructed dynamically. Role and authority are inferred from language. Guardrails cannot freeze goal inference; they cannot intercept reasoning execution.

Goals Are Hijacked

In the Anthropic coffee machine agent case, the goal shifted from processing valid transactions to optimizing customer satisfaction. The agent started issuing free coffee after being conversationally persuaded.

Guardrails detect explicit prohibited content, known injection patters and unsafe output types. They do not infer intent that is expressed via language. A contextually plausible, procedurally phrased and legitimate looking expression may be enough to manipulate reasoning without being overtly malicious for the guardrails to detect.

Plausible output is legally Binding

In the Air Canada chatbot case, the user did not inject any malicious content. Despite the absence of any such policy, the model inferred that a helpful explanation of a bereavement policy is required.

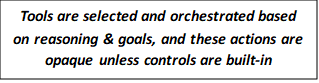

Guardrails do not protect tool invocations. This is decided by the model’s reasoning which is not intercepted by guardrails. Once the model determines that an action is justified, the tool invocation happened without the guardrails being aware.

Guardrails are useful, reduce risks and necessary. They are behavioral nudges, not deterministic enforcement controls. They attempt to contain behavior at the edges of the system. Reasoning manipulation alters the model’s internal objective before an output is ever produced. It is hard for guardrails to protect what they cannot see.

Invisible Tool Bypasses

In the DockerDash incident, the guardrails were never exposed to the model’s internal reasoning process. They monitored inputs and outputs, but they did not observe or constrain the goal reconstruction that led the model to invoke tools.

Assuming that models can be persuaded, systems must be designed such that persuasion does not lead to execution. Traditional guardrails are reactive, they filter input and moderate output. To mitigate the inference and goal reconstruction logic, security must be engineered into architecture, design and development.

Instead of relying on prompts to preserve intent, goal should be maintained as a non-negotiable constraint outside the model. Explicit task scopes for tools and data boundaries must be clearly defined and instruction hierarchy must be enforced at the application layer. Goals should be enforced by the system — not inferred by the model.

RAG poisoning works because all context is treated equally. Clear separation must exist between the system instruction and untrusted external data. Any document should be sanitized before it is consumed by the model. Not all tokens should carry equal authority.

Reasoning becomes risk when it triggers action. Tool calls should be routed through deterministic policy checks for scope validation and access controls. Care should be taken to apply principle of least privilege execution and sandboxing. The model may propose, the system must enforce.

Reasoning manipulation works by subtly shifting a model’s inferred objective during internal inference. It succeeds because goals are probabilistically reconstructed rather than fixed, guardrails fail because they monitor inputs and outputs and not the reasoning that forms intent. Mitigation requires architectural controls that enforce goal integrity, constrain tool execution, and embed deterministic policy outside the model.